A “natural” voice AI experience means responses arrive within the timing, rhythm, and continuity that human conversation expects.

When people ask why voice AI has latency and realism issues, the common assumption is that models are not advanced enough.

That assumption is wrong.

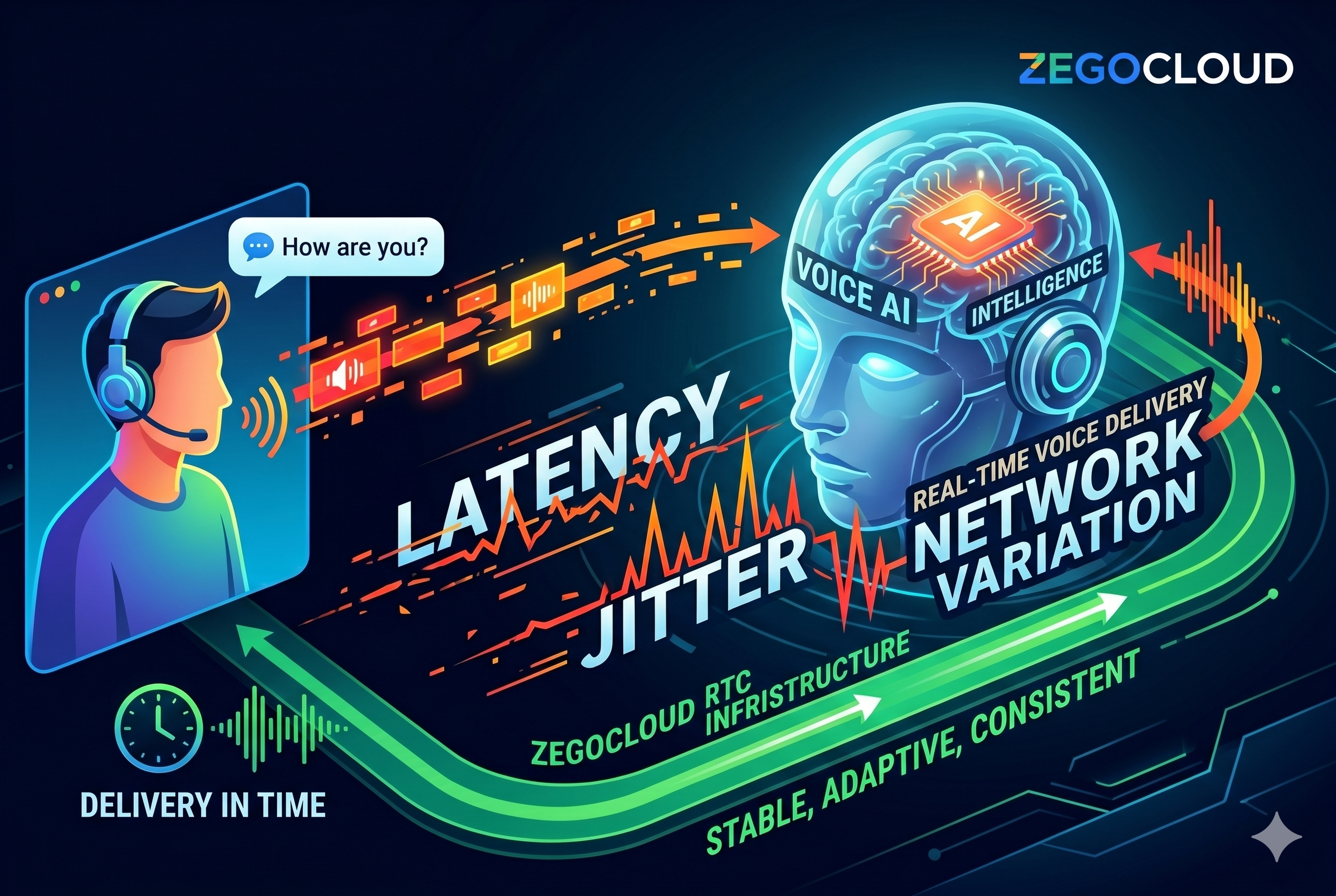

Voice AI feels unnatural primarily due to latency, jitter, and packet loss—not model quality. Even the most advanced AI systems cannot deliver human-like conversations if responses arrive too late, inconsistently, or with missing audio data.

In real-time communication, timing is part of intelligence.

Looking Beyond the Model for Voice AI Latency and Realism Issues

To truly understand why voice AI has latency and realism issues, you need to look beyond models and examine the full delivery pipeline.

We can break this into a 4-layer system:

| Layer | What It Does | Failure Mode | Key Metric |
| --- | --- | --- | --- |
| Model Layer | Generates and understands speech (LLM, ASR, TTS) | Robotic tone, poor comprehension | Accuracy, naturalness |
| Interaction Layer | Controls conversational timing | Awkward pauses, interruptions | End-to-end latency |
| Network Layer | Transports audio data in real time | Choppy audio, broken rhythm | Jitter, packet loss |
| Infrastructure Layer | Optimizes global delivery paths | Inconsistent experience across regions | Routing efficiency, QoS |

Most teams invest heavily in the first layer.

But users experience failures in the other three.

Where Conversation Actually Breaks

Human conversation operates within strict timing constraints:

- <200 ms latency → Feels natural

- 200–300 ms → Noticeable delay

- >300 ms → Breaks conversational flow

At the same time:

- Jitter > 30 ms → disrupts speech rhythm

- Packet loss > 1–2% → reduces intelligibility

These thresholds explain why voice AI has latency and realism issues in real-world environments, even when it performs well in controlled demos.
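As a rough illustration, the thresholds above can be turned into a simple health check. This is a minimal sketch, not a standard tool: the `LinkStats` type and the function names are hypothetical, and using 1% as the packet-loss cutoff is an assumption (the range given above is 1–2%).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class LinkStats:
    """Hypothetical container for one measurement window."""
    latency_ms: float  # end-to-end response latency
    jitter_ms: float   # variation in packet arrival timing
    loss_pct: float    # percentage of audio packets lost


def latency_band(latency_ms: float) -> str:
    """Map latency onto the three bands listed in the article."""
    if latency_ms < 200:
        return "natural"
    if latency_ms <= 300:
        return "noticeable delay"
    return "breaks flow"


def assess(stats: LinkStats) -> List[str]:
    """Return the list of threshold violations for a measurement window."""
    issues = []
    band = latency_band(stats.latency_ms)
    if band != "natural":
        issues.append(f"latency: {band}")
    if stats.jitter_ms > 30:
        issues.append("jitter: disrupts speech rhythm")
    if stats.loss_pct > 1.0:  # assumption: lower end of the 1-2% range
        issues.append("packet loss: reduces intelligibility")
    return issues
```

A call such as `assess(LinkStats(latency_ms=250, jitter_ms=40, loss_pct=0.5))` would flag latency and jitter but not packet loss.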

Why Better Models Don’t Solve It

It’s tempting to assume that more advanced AI will fix the problem.

But models don’t control delivery.

They can generate perfect responses—linguistically correct, contextually aware, even emotionally nuanced.

Yet if that response:

- arrives too late

- overlaps awkwardly

- or breaks mid-sentence

…the experience still fails.

This is the core disconnect:

Models optimize what to say. Real-time systems determine whether it feels natural.

What Actually Fixes It

Fixing this doesn’t come from pushing models further.

It comes from rethinking the system around interaction quality:

- Managing end-to-end latency, not just inference speed

- Adapting to unstable networks in real time

- Keeping audio streams continuous, even under packet loss

- Ensuring consistent performance across geographies

In other words, shifting from intelligence-first design to interaction-first design.
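To make "end-to-end latency, not just inference speed" concrete, here is a small sketch of a latency budget. Every stage name and millisecond figure below is a made-up placeholder rather than a measurement; only the 300 ms ceiling comes from the thresholds listed earlier.

```python
from typing import Dict

BUDGET_MS = 300  # ceiling taken from the conversational thresholds above

# Hypothetical per-stage delays in milliseconds (illustrative values only).
EXAMPLE_STAGES: Dict[str, int] = {
    "capture_and_encode": 20,
    "uplink": 40,
    "asr": 80,
    "llm_first_token": 150,
    "tts_first_audio": 60,
    "downlink": 40,
    "jitter_buffer_and_playout": 40,
}


def end_to_end_ms(stages: Dict[str, int]) -> int:
    """Total delay the user experiences: the sum of every stage."""
    return sum(stages.values())


def headroom_ms(stages: Dict[str, int], budget_ms: int = BUDGET_MS) -> int:
    """Remaining budget; a negative value means the pipeline is over budget."""
    return budget_ms - end_to_end_ms(stages)


def largest_stage(stages: Dict[str, int]) -> str:
    """Name of the single stage contributing the most delay."""
    return max(stages, key=lambda name: stages[name])
```

With these placeholder numbers the pipeline totals 430 ms. The model stage is the largest single contributor, yet removing it entirely would still leave 280 ms from everything else, which is the point of budgeting the whole path rather than inference alone.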

What Actually Makes Voice AI Feel Natural

To address voice AI's latency and realism issues, we need to move beyond model optimization and focus on system behavior.

A natural voice AI experience requires:

1. Stable, not just low latency

Human perception is sensitive to rhythm. Stability matters more than peak performance.

2. Real-time adaptation to network conditions

The system must adjust instantly when conditions change—without interrupting the conversation.

3. Loss-tolerant audio delivery

Missing or delayed packets should not break conversational flow.

4. Continuous interaction design

Voice AI must support interruption, overlap, and natural conversational dynamics.
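Stability can be measured as well as described. One common way to quantify it is the running interarrival jitter estimate defined for RTP in RFC 3550, which smooths the difference in transit time between consecutive packets with a gain of 1/16. The sketch below assumes timestamps are already in milliseconds (the RFC works in RTP timestamp units), and the function name is hypothetical.

```python
from typing import Sequence


def interarrival_jitter_ms(send_ms: Sequence[float], recv_ms: Sequence[float]) -> float:
    """Running jitter estimate in the style of RFC 3550, section 6.4.1.

    send_ms[i] and recv_ms[i] are the send and receive times of packet i.
    Clock offset between sender and receiver cancels out, because only
    differences between consecutive packets are used.
    """
    if len(send_ms) != len(recv_ms):
        raise ValueError("send_ms and recv_ms must have the same length")

    jitter = 0.0
    for i in range(1, len(send_ms)):
        # Change in transit time between packet i-1 and packet i.
        d = (recv_ms[i] - recv_ms[i - 1]) - (send_ms[i] - send_ms[i - 1])
        # Exponential smoothing with gain 1/16, as in the RFC.
        jitter += (abs(d) - jitter) / 16.0
    return jitter
```

A stream that arrives with the same spacing it was sent with yields an estimate of zero regardless of the absolute delay, which is why this metric captures rhythm rather than raw latency.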

Where Real-Time Infrastructure Becomes the Deciding Factor

Once the model is good enough, the delivery path decides whether the conversation feels natural.

To maintain natural conversation, a system must do more than deliver responses quickly—it must deliver them consistently under changing network conditions. That requires continuous optimization across the entire delivery path.

Platforms like ZEGOCLOUD address this by operating directly at the interaction layer, where timing and stability are determined. Specifically, they:

- Route traffic dynamically to avoid congestion and unstable paths

- Adjust bitrate in real time to match current network conditions

- Compensate for packet loss to preserve audio continuity

- Leverage a globally distributed network to reduce latency across regions

Together, these mechanisms don’t just improve speed—they stabilize the delivery of each response.

And in voice AI, stability is what preserves conversational rhythm—making interactions feel immediate, continuous, and ultimately, human.
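To give a sense of what real-time bitrate adjustment can look like, here is a generic sketch of a loss-driven controller that backs off quickly and recovers slowly. It is not ZEGOCLOUD's algorithm or any vendor's API: the function name, the loss thresholds, the step sizes, and the 16–64 kbps range are all assumptions chosen for illustration.

```python
def next_bitrate_kbps(
    current_kbps: int,
    loss_pct: float,
    min_kbps: int = 16,   # assumed floor for intelligible speech
    max_kbps: int = 64,   # assumed ceiling for a voice stream
) -> int:
    """Pick the audio bitrate for the next interval from observed packet loss."""
    if loss_pct > 2.0:
        # Heavy loss: cut the bitrate by a quarter to relieve congestion.
        proposed = current_kbps * 3 // 4
    elif loss_pct < 1.0:
        # Clean network: probe upward in small additive steps.
        proposed = current_kbps + 4
    else:
        # Borderline loss: hold steady to avoid oscillating.
        proposed = current_kbps
    # Never leave the configured range.
    return max(min_kbps, min(max_kbps, proposed))
```

The asymmetry is deliberate in this sketch: a sharp cut under loss protects continuity, while small upward steps avoid re-triggering the congestion that caused the loss.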

Conclusion

Voice AI doesn’t fail because it lacks intelligence.

It fails when timing breaks the illusion of conversation.

Latency, jitter, and network variability introduce distortions that no model alone can fix.

Solving this requires a shift in perspective—from optimizing intelligence to optimizing interaction.

ZEGOCLOUD reflects this shift by focusing on the infrastructure layer that ensures real-time communication remains stable, adaptive, and consistent, even in unpredictable global environments.

Because in the end, realism in voice AI is not just about what is said. It is about whether it arrives in time to feel human.
