If you’re trying to build a live streaming platform, you’ll quickly run into three core challenges:

- How do you scale to millions of viewers?

- How do you enable real-time interaction with minimal latency?

- How do you support recording and replay reliably?

Most tutorials don’t answer these questions.

Because getting a stream online is easy. Scaling it into a real product is not.

Why Building a Live Streaming Platform Is Harder Than It Looks

Most teams feel confident they know how to build a live streaming platform—right up until they try to scale it.

The first version usually goes smoothly. You set up a simple pipeline, run a test stream, maybe invite a few users to watch in real time. Everything works. It feels like you’re done.

But that confidence doesn’t last long.

The moment you move beyond the demo, things start to break. Chat messages arrive out of sync. As more users join, latency creeps in. You turn on recording—and discover parts of the session are missing.

At that moment, it becomes clear: Building a live streaming feature is easy—building a live streaming platform is not.

The Shift: From Video Streaming to Real-Time Interaction

Live streaming used to be a one-way experience.

Now it’s something else entirely.

Users don’t just watch—they comment in real time, join as guests and send reactions that influence what happens next.

So when you build a live streaming platform today, you’re not just delivering video. You’re creating a real-time interactive environment.

And that introduces a fundamental challenge: How do you scale video delivery while keeping interaction truly real-time?

Why Most Live Streaming Architectures Fail at Scale

At some point, every team faces the same architectural dilemma.

You start with CDN-based streaming because it scales beautifully. Thousands—even millions—of viewers can watch smoothly.

But then interaction breaks.

- Chat feels ahead of the video

- Guest speakers lag

- Real-time engagement disappears

So you switch to WebRTC for low latency. Now interaction feels instant—but scaling becomes difficult and expensive.

This tradeoff is not accidental. It’s structural.

The Core Tradeoff: CDN vs WebRTC

| Capability | CDN Streaming (HLS) | WebRTC |
| --- | --- | --- |
| Latency | High (5–10 s) | Ultra-low (<300 ms) |
| Scalability | Extremely high | Limited |
| Interaction | Poor | Excellent |
| Cost at scale | Efficient | Expensive |

Neither approach alone is enough.

How to Choose the Right Architecture to Build a Live Streaming Platform

The platforms that scale don’t choose between CDN and WebRTC.

They combine them.

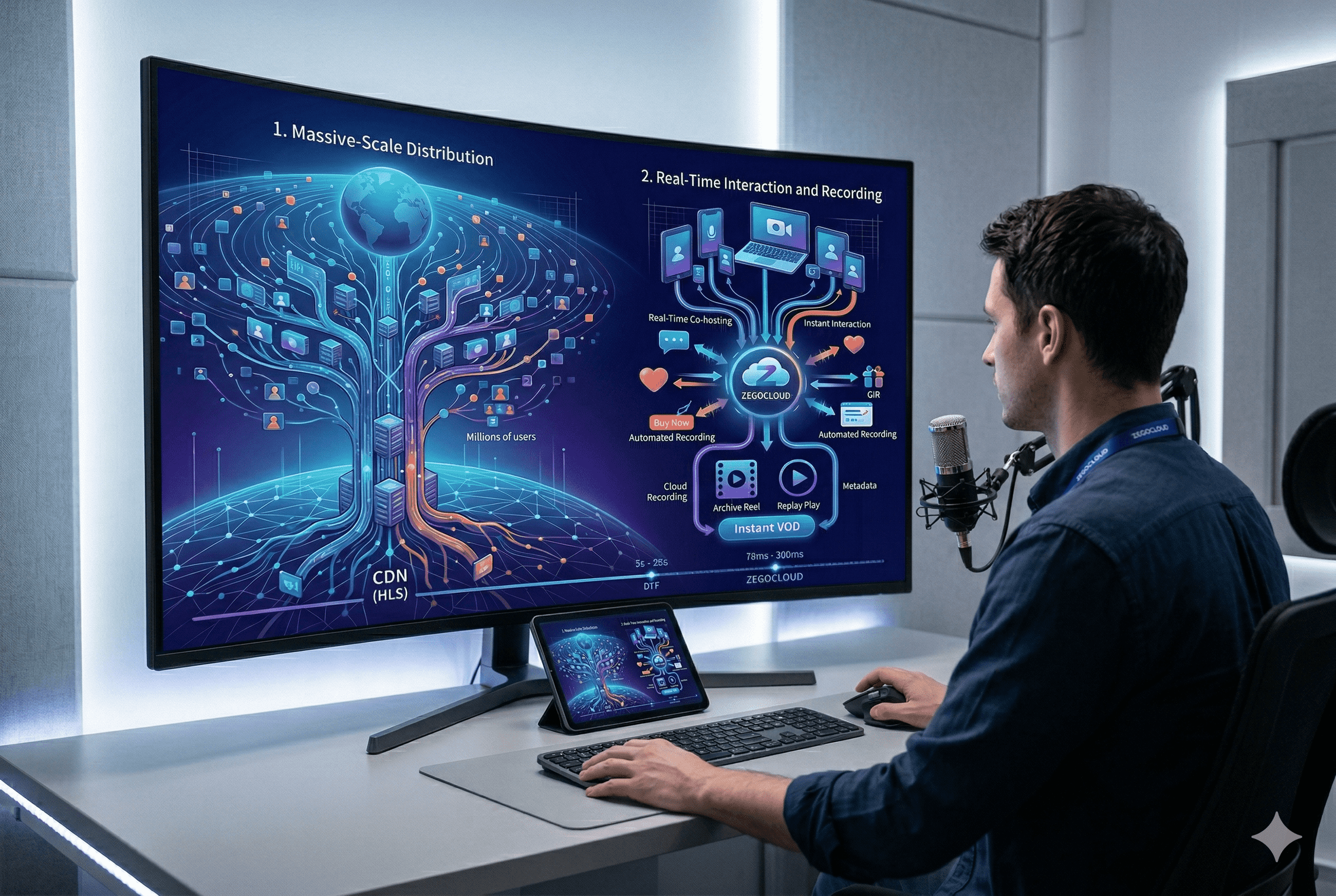

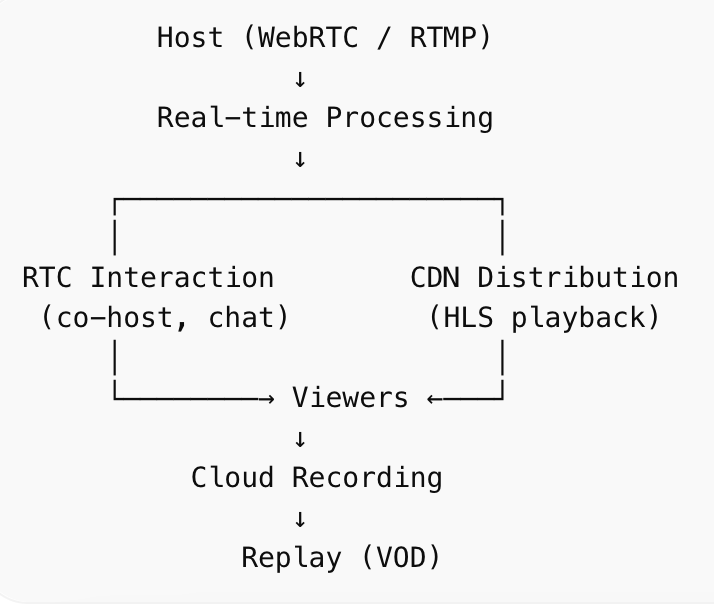

Here’s what a modern architecture looks like when you build a live streaming platform for scale and interaction:

This hybrid model solves the core tension:

- RTC handles real-time interaction

- CDN handles large-scale delivery

This is exactly where most teams hit a wall—and why solutions like ZEGOCLOUD exist.

Instead of stitching together RTC, CDN, messaging, and recording manually, ZEGOCLOUD unifies these into a single real-time infrastructure layer—removing the need for cross-system synchronization.

Why Architecture Matters

Here’s where theory meets reality.

At scale, the problem is no longer “streaming”—it’s distribution physics.

Let’s say:

- One stream = 2 Mbps

- 1 million concurrent viewers

That equals 2 Tbps of outbound bandwidth.

No single server—or even cluster—can handle that directly.
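The arithmetic above can be checked in a few lines. This is a minimal sketch: the function names are illustrative, and the 10 Gbps per-server egress figure is an assumption chosen only to show the order of magnitude, not a number from any specific deployment.

```python
import math


def egress_bps(bitrate_bps: float, viewers: int) -> float:
    """Total outbound bandwidth if every viewer receives their own copy."""
    return bitrate_bps * viewers


def servers_needed(total_bps: float, per_server_bps: float) -> int:
    """Servers required at a given per-server egress capacity (no headroom)."""
    return math.ceil(total_bps / per_server_bps)


total = egress_bps(2e6, 1_000_000)   # 2 Mbps x 1 million viewers
print(total / 1e12, "Tbps")          # 2.0 Tbps
print(servers_needed(total, 10e9))   # 200, assuming 10 Gbps per server
```

Even under that generous assumption, the stream needs hundreds of fully saturated machines, before accounting for headroom, failover, or geographic spread.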

This is why:

- Pure WebRTC architectures collapse under scale

- CDN fan-out becomes mandatory

But CDNs introduce latency.

So the only viable architecture is:

- RTC for a small number of participants (hosts, guests)

- CDN for massive audience distribution
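The split above can be sketched as a simple routing rule keyed on each user's role. Everything here is hypothetical: the `Role` and `Transport` names, the `MAX_RTC_PARTICIPANTS` cap, and the helper functions are illustrations of the idea, not part of any particular SDK.

```python
from enum import Enum


class Role(Enum):
    HOST = "host"
    GUEST = "guest"
    AUDIENCE = "audience"


class Transport(Enum):
    RTC = "rtc"   # low latency, small group
    CDN = "cdn"   # higher latency, massive fan-out


# Hypothetical cap on simultaneous RTC participants (assumption).
MAX_RTC_PARTICIPANTS = 10


def pick_transport(role: Role) -> Transport:
    """Hosts and guests talk over RTC; everyone else watches via CDN."""
    return Transport.RTC if role in (Role.HOST, Role.GUEST) else Transport.CDN


def promote_to_guest(roles: dict[str, Role], user_id: str) -> Transport:
    """Move a viewer on stage and return the transport they should switch to."""
    active = sum(1 for r in roles.values() if r is not Role.AUDIENCE)
    if active >= MAX_RTC_PARTICIPANTS:
        raise RuntimeError("RTC participant limit reached")
    roles[user_id] = Role.GUEST
    return pick_transport(roles[user_id])


roles = {"alice": Role.HOST, "bob": Role.AUDIENCE}
print(pick_transport(roles["bob"]))    # Transport.CDN
print(promote_to_guest(roles, "bob"))  # Transport.RTC
```

The point of the cap is that the expensive low-latency path stays small and bounded while the audience size is free to grow on the CDN side.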

Platforms like ZEGOCLOUD abstract this complexity by combining global edge networks with real-time communication infrastructure—so developers don’t have to solve bandwidth distribution themselves.

How to Design Real-Time Interaction in a Live Streaming Platform

Once the architecture is in place, the next layer is interaction.

This is where many platforms fail—not because they lack features, but because they lack synchronization.

Interaction Is a Timing Problem, Not a UI Problem

When you build a live streaming platform, interaction introduces a second real-time system alongside video:

- messaging (chat, reactions)

- user state (join/leave, role switching)

- co-host audio/video streams

Each of these must align with the same timeline as the video stream.

If they don’t:

- chat appears ahead of the video

- reactions feel disconnected

- co-host conversations become unnatural

Why Most Implementations Break

A common architecture looks like this:

- Video → CDN (HLS, delayed)

- Chat → WebSocket (real-time)

These systems operate independently, which creates unavoidable mismatch:

| Layer | Latency | Result |
| --- | --- | --- |
| Video (CDN) | 5–10 s | Buffered playback |
| Chat (WebSocket) | <500 ms | Instant |

That gap is what users feel as “laggy interaction.”

How Modern Platforms Fix This

To fix this, interaction must be embedded into the streaming system, not layered on top.

This typically requires:

- shared timestamps across media and messages

- synchronized delivery pipelines

- RTC-based communication for participants
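One way to picture shared timestamps is a client-side buffer that holds each chat message until the viewer's video has reached the moment the message was sent. This is a minimal sketch under assumptions: `stream_ts` stands for a timestamp on a clock shared by media and messages, and `ChatMessage` and `SyncedChat` are hypothetical names, not an existing API.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class ChatMessage:
    stream_ts: float                  # seconds on the shared stream clock
    text: str = field(compare=False)  # excluded from ordering


class SyncedChat:
    """Holds chat messages until the viewer's video reaches their timestamp."""

    def __init__(self) -> None:
        self._pending: list[ChatMessage] = []

    def receive(self, msg: ChatMessage) -> None:
        """Queue a message as soon as it arrives over the network."""
        heapq.heappush(self._pending, msg)

    def due(self, playback_ts: float) -> list[ChatMessage]:
        """Pop every message whose timestamp the video has already passed."""
        out = []
        while self._pending and self._pending[0].stream_ts <= playback_ts:
            out.append(heapq.heappop(self._pending))
        return out


chat = SyncedChat()
chat.receive(ChatMessage(12.0, "nice goal!"))
chat.receive(ChatMessage(8.5, "here we go"))
print([m.text for m in chat.due(9.0)])    # ['here we go']
print([m.text for m in chat.due(12.0)])   # ['nice goal!']
```

This trades chat immediacy for alignment, so it suits CDN viewers. Participants on the RTC path are already close to real time and would not need the buffer.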

This is exactly why platforms like ZEGOCLOUD integrate messaging, co-hosting, and streaming into one real-time stack—ensuring interaction stays aligned without manual synchronization.

How to Build Recording and Replay into Your Live Streaming Platform

After interaction is working, the next system you need to design is recording.

Because when you build a live streaming platform, the live moment is only part of the value—the rest comes from what happens after.

Recording Is Not Just Storage — It’s a System

At a technical level, recording means capturing a continuous, real-time media stream under unstable conditions:

- network fluctuations

- packet loss

- bitrate changes

- multiple participants

If handled incorrectly, this leads to:

- missing segments

- desynchronized audio/video

- unusable recordings

The Three Recording Approaches

| Approach | Risk | Scalability | Verdict |
| --- | --- | --- | --- |
| Client-side recording | Very high | Very low | ❌ Not viable |
| Single-node recording | Medium | Limited | ⚠️ Risky |
| Cloud/distributed recording | Low | High | ✅ Standard |

How Scalable Recording Systems Work

A production-grade pipeline includes:

- Server-side stream capture

- Segmented recording (small chunks)

- Distributed storage (redundancy)

- Post-processing (stitching + transcoding)

This ensures reliability even under failure conditions.
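To make the segmented approach concrete, here is a rough sketch of the bookkeeping a post-processing step might do before stitching: detect missing chunks and drop duplicate copies that came from redundant storage. The `Segment` type and both helpers are hypothetical, and the sketch assumes each chunk carries a monotonically increasing sequence number.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    seq: int          # monotonically increasing sequence number (assumption)
    duration: float   # seconds
    uri: str          # where the chunk is stored


def find_gaps(segments: list[Segment]) -> list[int]:
    """Return sequence numbers missing between the first and last segment."""
    seen = {s.seq for s in segments}
    if not seen:
        return []
    return [n for n in range(min(seen), max(seen) + 1) if n not in seen]


def stitch_order(segments: list[Segment]) -> list[Segment]:
    """De-duplicate by sequence number (first copy wins) and sort for stitching."""
    unique: dict[int, Segment] = {}
    for s in segments:
        unique.setdefault(s.seq, s)
    return sorted(unique.values(), key=lambda s: s.seq)


segs = [
    Segment(2, 4.0, "b"),
    Segment(0, 4.0, "a"),
    Segment(2, 4.0, "b-replica"),   # redundant copy of chunk 2
    Segment(4, 4.0, "d"),
]
print(find_gaps(segs))                       # [1, 3]
print([s.uri for s in stitch_order(segs)])   # ['a', 'b', 'd']
```

Small chunks are what make this recoverable: a failure costs a few seconds that can be flagged or refetched from a replica, rather than the whole session.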

Platforms like ZEGOCLOUD provide cloud recording integrated directly into the streaming pipeline—eliminating the need to build this system from scratch.

From Recording to Replay: Completing the System

Recording alone is not enough—you need instant usability.

A strong replay system should:

- enable playback immediately after live ends

- support adaptive bitrate

- integrate with your content system
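If the recorded chunks are already HLS-compatible, immediate playback can be as simple as writing playlists once the stream ends. The sketch below assumes that case and uses only standard HLS playlist tags. The function names, segment URIs, and variant values are illustrative.

```python
import math


def vod_playlist(segments: list[tuple[str, float]]) -> str:
    """Build an HLS media playlist for finished (VOD) content.

    segments: (uri, duration_seconds) pairs in playback order.
    """
    if not segments:
        raise ValueError("at least one segment is required")
    # Target duration must be an integer no smaller than any segment.
    target = math.ceil(max(duration for _, duration in segments))
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",
        f"#EXT-X-TARGETDURATION:{target}",
        "#EXT-X-MEDIA-SEQUENCE:0",
        "#EXT-X-PLAYLIST-TYPE:VOD",
    ]
    for uri, duration in segments:
        lines.append(f"#EXTINF:{duration:.3f},")
        lines.append(uri)
    lines.append("#EXT-X-ENDLIST")   # marks the recording as complete
    return "\n".join(lines) + "\n"


def master_playlist(variants: list[tuple[str, int, str]]) -> str:
    """Build a master playlist for adaptive bitrate.

    variants: (playlist_uri, peak_bandwidth_bps, "WIDTHxHEIGHT") tuples.
    """
    lines = ["#EXTM3U"]
    for uri, bandwidth, resolution in variants:
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={bandwidth},RESOLUTION={resolution}"
        )
        lines.append(uri)
    return "\n".join(lines) + "\n"


print(vod_playlist([("seg0.ts", 4.0), ("seg1.ts", 3.2)]))
print(master_playlist([("720p.m3u8", 2_000_000, "1280x720"),
                       ("360p.m3u8", 800_000, "640x360")]))
```

The master playlist is what gives the player adaptive bitrate: it lists one media playlist per rendition and lets the client switch between them as bandwidth changes.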

Because ZEGOCLOUD’s cloud recording is part of its live streaming pipeline, developers can generate replay content without building separate infrastructure.

Why Replay Drives Long-Term Growth

When replay is built correctly:

- content continues generating traffic

- users engage asynchronously

- monetization extends beyond live sessions

Without it, every stream becomes a one-time event.

When You Should NOT Build a Live Streaming Platform From Scratch

At this point, the real question is no longer how to build—but whether you should.

Build vs Buy Decision Matrix

| Situation | Recommendation |
| --- | --- |
| MVP / startup stage | Use SDK |
| Need fast global scaling | Use SDK |
| Limited infra team | Use SDK |
| Deep infra expertise + time | Consider building |
| Real-time interaction required | SDK strongly recommended |

The Real Cost of Building

To build a live streaming platform from scratch, you need to solve:

- global media routing

- real-time communication

- synchronization

- recording pipelines

- playback systems

That’s not a feature—it’s an infrastructure company.

The Practical Choice

This is why most teams choose platforms like ZEGOCLOUD.

Instead of spending months building infrastructure, you can:

- launch faster with prebuilt components

- scale globally from day one

- focus on product differentiation

Conclusion

The next generation of platforms will push real-time interaction even further.

We’re already seeing:

- AI-powered moderators managing live chat

- AI co-hosts joining live sessions

- Real-time translation enabling global participation

When you build a live streaming platform in the coming years, you’re not just building media delivery.

You’re building a real-time, intelligent communication layer.

FAQ

1. What is the best architecture to build a live streaming platform today?

A hybrid architecture combining WebRTC (for interaction) and CDN (for distribution) is the industry standard.

2. How do I reduce latency in live streaming?

Use WebRTC for real-time scenarios, deploy edge nodes globally, and optimize routing and bitrate adaptation.

3. How can I support millions of viewers?

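Keep hosts and guests on RTC and fan the stream out to the audience through a CDN, so that no single server or cluster has to carry the full outbound bandwidth. For recording at that scale, use server-side cloud recording to ensure reliability, scalability, and automatic replay generation.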

4. Should I build or use an SDK?

For most teams, using a solution like ZEGOCLOUD is the fastest and most scalable approach, allowing you to focus on product rather than infrastructure.

5. What is the cost of scaling to 100k+ concurrent viewers?

ZEGOCLOUD offers a transparent pay-as-you-go model. You can start to build a live streaming platform today and get 10,000 free minutes upon signing up, with predictable pricing as your concurrency grows.

6. Can we support “PK Battles” or multi-host scenarios?

Yes. The ZEGOCLOUD Live Streaming SDK natively supports co-hosting and stream-mixing. You can combine multiple host streams into a single output at the edge, reducing the decoding burden on audience devices.
